2. Product description

2.1. IPU-M2000 in IPU-POD systems

Graphcore’s IPU-M2000 IPU-Machine is designed to support scale-up and scale-out machine intelligence compute. The IPU-POD reference designs, based on the IPU-M2000, deliver scalable building blocks for IPU-POD systems.

Virtualization and provisioning software allows AI compute resources to be allocated elastically to users and grouped for both model-parallel and data-parallel compute in all IPU-POD systems. This supports multiple users and mixed workloads, as well as single systems for large models.

IPU-POD system-level products, including IPU-M2000 machines, host servers and network switches, are available from Graphcore channel partners globally. Customers can select their preferred server brand from a range of leading server vendors; multiple host servers from different vendors are approved for use in IPU-POD systems (see the approved server list for details). The disaggregated host architecture allows server requirements to vary with the workload.

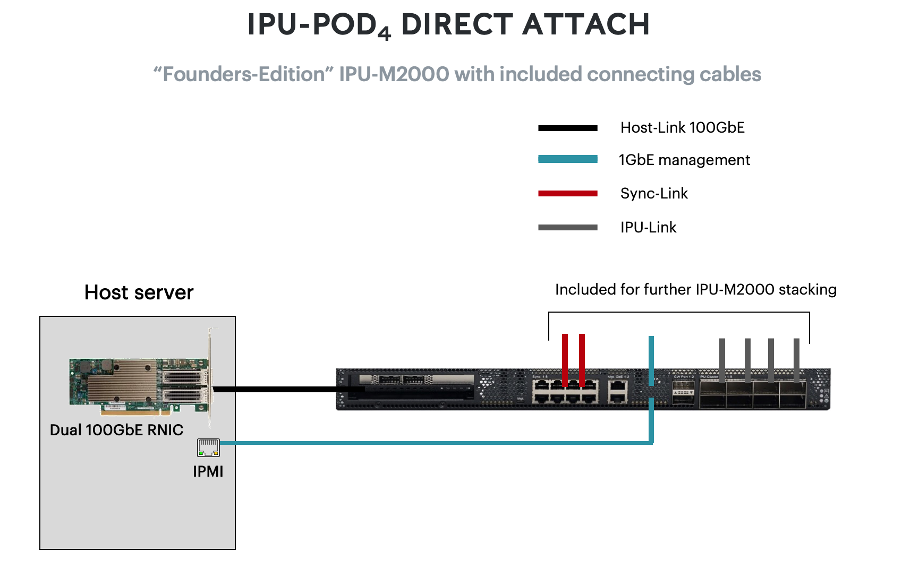

The “Founder’s Edition” IPU-M2000 comes complete with all the cables required for installation in IPU-POD systems.

2.2. IPU‑POD4 Direct Attach

The single IPU-M2000 blade in an IPU‑POD4 Direct Attach system is pre-configured with Virtual-IPU (V-IPU) management software, which simplifies installation and integration with existing infrastructure. The host server required to run the Poplar SDK is not included. In this datasheet we use the Dell R6525 server with dual-socket second-generation AMD EPYC CPUs as the default offering. The default is one host server, but up to four may be required depending on the workload; please speak to Graphcore sales.

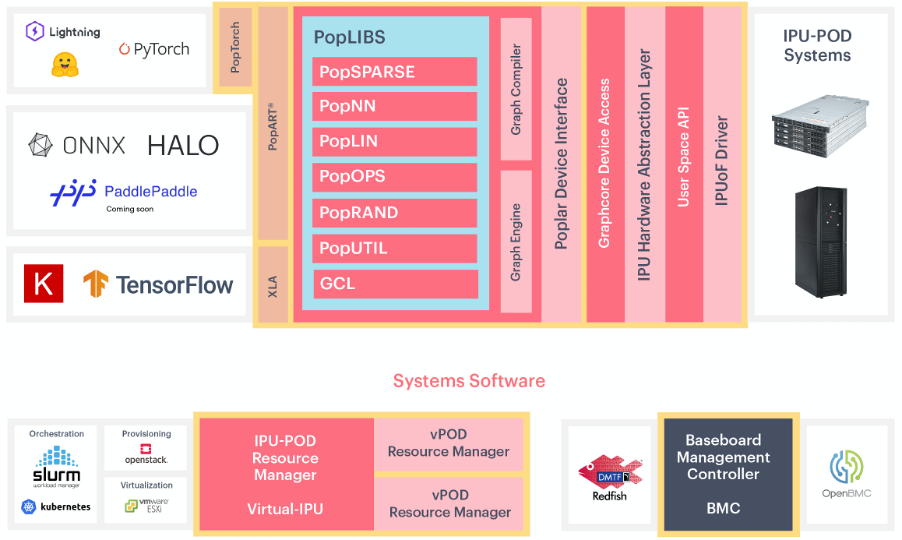

2.3. Software

IPU-POD systems are fully supported by Graphcore’s Poplar® software development environment, a complete and mature platform for ML development and deployment. Standard ML frameworks, including TensorFlow, Keras, ONNX, Halo, PaddlePaddle, HuggingFace, PyTorch and PyTorch Lightning, are fully supported, and PopLibs can be accessed through the Poplar C++ API. PopLibs, PopART and TensorFlow are available as open source in the Graphcore GitHub repository at https://github.com/graphcore. PopTorch provides a simple wrapper around PyTorch programs that lets them run seamlessly on IPUs. The Poplar SDK also includes the PopVision™ visualisation and analysis tools, which provide performance monitoring for IPUs; their graphical analysis enables detailed inspection of all processing activities.

In addition to these Poplar development tools, IPU-POD systems are enabled with software support for industry-standard converged infrastructure management tools, including OpenBMC, Redfish, Docker containers, and orchestration with Slurm and Kubernetes.

Fig. 2.1 IPU-POD software

| Complete end-to-end software stack for developing, deploying and monitoring AI model training jobs as well as inference applications on the Graphcore IPU | |
|---|---|
| ML frameworks | TensorFlow, Keras, PyTorch, PyTorch Lightning, HuggingFace, PaddlePaddle, Halo, and ONNX |
| Deployment options | Bare metal (Linux), VM (Hyper-V), containers (Docker) |
| Host-Links | RDMA-based disaggregation between a host and IPU over a 100Gbps RoCEv2 NIC, using the IPU over Fabric (IPUoF) protocol |
| | Host-to-IPU ratios supported: 1:16 up to 1:64 |
| Graphcore Communication Library (GCL) | IPU-optimized communication and collective library integrated with the Poplar SDK stack |
| | Supports all-reduce (sum, max), all-gather, reduce, broadcast |
| | Scales at near-linear performance to 64k IPUs |
| PopVision | Visualization and analysis tools |

For a full list of supported OS, VM and container options, see the Graphcore support portal at https://www.graphcore.ai/support.
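The GCL collectives listed in the software table are standard collective-communication operations. As a conceptual sketch only, the Python below simulates what each one computes, treating each inner list as the data held by one IPU (replica). It does not use the GCL or Poplar API, and every function name in it is hypothetical.

```python
from typing import Callable, List

Vector = List[float]


def all_reduce(shards: List[Vector], op: Callable[[float, float], float]) -> List[Vector]:
    """Element-wise reduction across replicas; every replica receives the result."""
    reduced = list(shards[0])
    for shard in shards[1:]:
        reduced = [op(a, b) for a, b in zip(reduced, shard)]
    return [list(reduced) for _ in shards]


def reduce_to_root(shards: List[Vector], op: Callable[[float, float], float], root: int = 0) -> Vector:
    """Element-wise reduction across replicas; only the root replica keeps the result."""
    return all_reduce(shards, op)[root]


def all_gather(shards: List[Vector]) -> List[Vector]:
    """Concatenate every replica's shard; every replica receives the full vector."""
    gathered = [x for shard in shards for x in shard]
    return [list(gathered) for _ in shards]


def broadcast(shards: List[Vector], root: int = 0) -> List[Vector]:
    """Copy the root replica's data to every replica."""
    return [list(shards[root]) for _ in shards]


if __name__ == "__main__":
    # Hypothetical data: one small gradient vector per IPU on a 4-IPU IPU-M2000.
    grads = [[1.0, 4.0], [2.0, 3.0], [3.0, 2.0], [4.0, 1.0]]
    print(all_reduce(grads, lambda a, b: a + b)[0])  # [10.0, 10.0]  (sum)
    print(all_reduce(grads, max)[0])                 # [4.0, 4.0]    (max)
    print(all_gather([[1.0], [2.0]])[1])             # [1.0, 2.0]
    print(broadcast(grads, root=2)[0])               # [3.0, 2.0]
```

In data-parallel training, the sum all-reduce is the step that combines per-replica gradients so every replica applies the same weight update.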

| IPU-Fabric topology discovery and validation | |
|---|---|
| Provisioning | gRPC and SSH/CLI for IPU allocation/de-allocation into isolated domains (vPods) |
| | Plug-ins for Slurm and Kubernetes (K8s) |
| Resource monitoring | gRPC and SSH/CLI for accessing the IPU-M2000 monitoring service |
| | Prometheus node exporter and Grafana (visualization) support |
| Baseboard Management Controller (OpenBMC) | Dual-image firmware with local rollback support |
| | Console support, CLI/SSH based |
| | Serial-over-LAN and Redfish REST API |

2.4. Technical specifications

| IPU processors | 4 GC200 IPU processors |
|---|---|
| | 5,888 IPU-Cores™ with independent code execution on 35,328 worker threads |
| AI compute | 0.999 petaFLOPS AI (FP16.16) compute |
| | 0.2497 petaFLOPS FP32 compute |
| Memory | Up to ~260GB memory (3.6GB In-Processor Memory™ plus up to 256GB Streaming Memory™) |
| | 180TB/s memory bandwidth |
| Streaming Memory | 2 DDR4-2400 DIMM DRAM |
| | Options: 2x 64GB (default SKU in IPU-M2000 Founder’s Edition) or 2x 128GB (contact sales) |
| IPU-Gateway | 1x IPU-Gateway chip with integrated Arm Cortex quad-core A-series SoC |
| Internal SSD | 32GB eMMC |
| | 1TB M.2 SSD |
| NIC | RoCEv2 NIC (1x PCIe Gen4 x16 FH¾L slot) |
| | Standard QSFP ports |
| Mechanical | 1U 19-inch chassis (Open Compute compliant) |
| | 440mm (width) x 728mm (depth) x 1U (height) |
| | Weight: 16.395kg (36.14lbs) |
| Lights-out management | OpenBMC AST2520 |
| | 2x 1GbE RJ45 management ports |
| IPU-Links | 8x IPU-Links supporting 2Tbps bi-directional bandwidth |
| | 8x OSFP ports |
| Switch-less scalability | Up to 8 IPU-M2000s in directly connected stacked systems |
| | Up to 16 IPU-M2000s in IPU-POD systems |
| GW-Links | 2x GW-Links (IPU-Link extension over 100GbE) |
| | 2x QSFP28 ports |
| | Switch or switch-less scalability supporting 400Gbps bi-directional bandwidth |
| | Up to 1024 IPU-M2000s connected |
| Cooling | Air cooled with built-in N+1 hot-plug fan cooling system |
| Airflow | Mounted for front-to-back airflow: air enters at the front of the rack (single door, cold-aisle side) and exits at the back of the rack (split door, hot-aisle side) |
| Airflow rate | 103 CFM (measured) per IPU-M2000 |
| PSU | 2x 1500 W hot-plug PSUs (standard SSI slim type 54mm) |
| Input power (Vac) | 100-240 Vac (115-230 Vac nominal) |
| Power cap | 1500 W with programmable power cap |
| Redundancy | 1+1 redundancy |
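Several figures in the specification table can be cross-checked, or scaled up for planning, with simple arithmetic. The sketch below is illustrative only and rests on stated assumptions: decimal units (2Tbps = 2,000Gbps), bandwidth shared evenly across the eight IPU-Links, GW-Link bi-directional bandwidth counting both directions of each 100GbE link, and airflow and power adding linearly per machine at the 1500 W power cap, excluding host servers and switches. All function names are hypothetical.

```python
"""Derived per-IPU and per-group figures from the IPU-M2000 specification table."""

# Values copied from the specification table (one IPU-M2000).
IPUS_PER_MACHINE = 4
IPU_CORES = 5_888
WORKER_THREADS = 35_328
IN_PROCESSOR_MEMORY_GB = 3.6
DIMM_COUNT = 2
IPU_LINK_COUNT = 8
IPU_LINK_TOTAL_GBPS = 2_000   # 2Tbps, assuming decimal units
GW_LINK_COUNT = 2
GW_LINK_RATE_GBPS = 100       # each GW-Link runs over 100GbE
AIRFLOW_CFM = 103
POWER_CAP_W = 1_500


def per_ipu_breakdown() -> dict:
    """Split the per-machine figures across the four GC200 IPUs."""
    return {
        "cores_per_ipu": IPU_CORES // IPUS_PER_MACHINE,      # 1,472
        "threads_per_core": WORKER_THREADS // IPU_CORES,     # 6
        "in_processor_gb_per_ipu": IN_PROCESSOR_MEMORY_GB / IPUS_PER_MACHINE,
    }


def total_memory_gb(dimm_size_gb: int) -> float:
    """In-Processor Memory plus Streaming Memory for one machine."""
    return IN_PROCESSOR_MEMORY_GB + DIMM_COUNT * dimm_size_gb


def link_bandwidth_gbps() -> dict:
    """Per-link IPU-Link share and the GW-Link bi-directional total."""
    return {
        "per_ipu_link": IPU_LINK_TOTAL_GBPS / IPU_LINK_COUNT,
        # Both directions of each 100GbE GW-Link are counted.
        "gw_links_bidirectional": GW_LINK_COUNT * GW_LINK_RATE_GBPS * 2,
    }


def rack_budget(machines: int) -> dict:
    """Naive linear totals for a group of IPU-M2000s (hosts and switches excluded)."""
    return {
        "ipus": machines * IPUS_PER_MACHINE,
        "airflow_cfm": machines * AIRFLOW_CFM,
        "power_at_cap_w": machines * POWER_CAP_W,
    }


if __name__ == "__main__":
    print(per_ipu_breakdown())
    print(round(total_memory_gb(64), 1), round(total_memory_gb(128), 1))  # 131.6 259.6
    print(link_bandwidth_gbps())  # {'per_ipu_link': 250.0, 'gw_links_bidirectional': 400}
    print(rack_budget(16))  # {'ipus': 64, 'airflow_cfm': 1648, 'power_at_cap_w': 24000}
```

The 2x 128GB option gives 259.6GB, which is the "~260GB" quoted in the Memory row; the default Founder’s Edition SKU (2x 64GB) gives 131.6GB.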

2.5. Environmental characteristics

| Operating temperature and humidity (inlet air) | 10-32°C (50-90°F) at 20%-80% RH (*) |
|---|---|
| Operating altitude | 0 to 3,048m (0-10,000ft) (**) |

(*) At altitudes below 900m (3,000ft), in a non-condensing environment

(**) Maximum ambient temperature is derated by 1°C per 300m above 900m

For power caps higher than 1700W per IPU-M2000, please contact Graphcore sales for environmental guidance.
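The (**) derating rule can be turned into a quick site-planning check. The sketch below assumes the 32°C upper inlet limit applies up to 900m and is reduced linearly (pro rata, not in whole 300m steps) above that, up to the 3,048m operating limit. The function name is hypothetical, and the result is not a substitute for Graphcore's environmental guidance.

```python
def max_inlet_temp_c(altitude_m: float) -> float:
    """Maximum inlet air temperature (°C) at a given site altitude.

    Assumes the 32°C limit holds up to 900m and is reduced linearly
    by 1°C for every 300m above that.
    """
    BASE_LIMIT_C = 32.0
    DERATE_START_M = 900.0
    DERATE_STEP_M = 300.0
    MAX_ALTITUDE_M = 3_048.0

    if not 0 <= altitude_m <= MAX_ALTITUDE_M:
        raise ValueError("altitude outside the 0 to 3,048m operating range")
    excess_m = max(0.0, altitude_m - DERATE_START_M)
    return BASE_LIMIT_C - excess_m / DERATE_STEP_M


if __name__ == "__main__":
    for site_m in (500, 1_500, 3_048):
        print(site_m, round(max_inlet_temp_c(site_m), 2))
    # 500 32.0
    # 1500 30.0
    # 3048 24.84
```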

2.6. Standards compliance

| EMC standards | Emissions: FCC CFR 47, ICES-003, EN55032, EN61000-3-2, EN61000-3-3, VCCI 32-1 |
|---|---|
| | Immunity: EN55035, EN61000-4-2, EN61000-4-3, EN61000-4-4, EN61000-4-5, EN61000-4-6, EN61000-4-8, EN61000-4-11 |
| Safety standards | IEC62368-1 2nd Edition, IEC60950-1, UL62368-1 2nd Edition |
| Certifications | North America (FCC, UL), Europe (CE), UK (UKCA), Australia (RCM), Taiwan (BSMI), Japan (VCCI) |
| | South Korea (KC), China (CQC) |
| | CB-62368, CB-60950 |
| Environmental standards | RoHS Directive 2011/65/EU, REACH Regulation (EC) 1907/2006 Annex XVII, WEEE Directive 2012/19/EU |

The European Directive 2012/19/EU on Waste Electrical and Electronic Equipment (WEEE) states that these appliances should not be disposed of as part of routine municipal solid waste, but collected separately. Separate collection optimises the recovery and recycling of the materials they contain and prevents potential damage to human health and the environment from any hazardous substances present.

The crossed-out bin symbol is printed on all products as a reminder that they must not be disposed of with other household waste.

Owners of electrical and electronic equipment (EEE) should contact their local government agencies to identify local WEEE collection and treatment systems for the environmentally sound recycling and/or disposal of end-of-life computer products. For more information on proper disposal of these devices, contact your local public utility service.

2.7. Ordering information

| Part number | Description |
|---|---|
| GC-ADA2-00 | IPU-M2000 |
| GC-ADA2-FE | IPU-M2000 Founder’s Edition |

IPU-POD systems are available to order from Graphcore channel partners – see https://www.graphcore.ai/partners for details of your nearest Graphcore partner.